What is Culturally Responsive Evaluation?

Sarah M. Dunifon

In previous features, we’ve focused on participatory evaluation and utilization-focused evaluation. Today, we’ll discuss culturally responsive evaluation to conclude our series on evaluation approaches that guide our work at Improved Insights.

Culturally responsive evaluation (CRE) is an approach that centers on the fact that our work is influenced by culture and biases. While we’d like to think that evaluation is objective in nature, we’re all informed by our own lived experiences, positionality, identity, and cultural and societal inputs. In practice, this approach asks us to be aware of these influences and to build into our practice ways of attuning to the cultures of others.

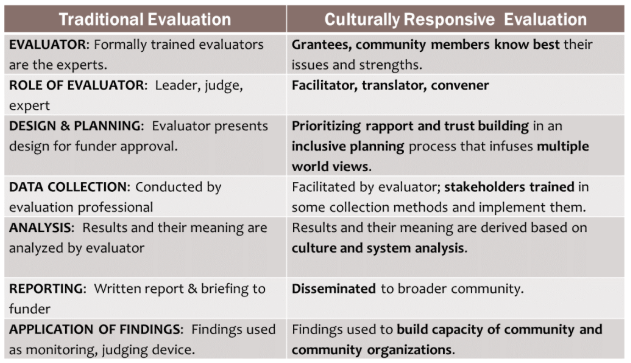

Practicing CRE is more ethical and more inclusive, and it produces more valid work (“Culturally Responsive Evaluation,” 2015). There are several key differences between traditional evaluation and CRE, described in the following table by Cheryl Chang, author of the article “Learning with Diverse Communities Through Culturally Responsive Evaluation” (as cited in “Culturally Responsive Evaluation,” 2015).

Table 1. Cheryl Chang’s Table of the Differences between Traditional and Culturally Responsive Evaluation

First, traditional evaluation positions the evaluator as the expert who should guide and make decisions in the evaluation process, whereas CRE recognizes that program managers, audiences, and community members are best suited to know what will work for their programs. In CRE, evaluators serve as facilitators for the work, and, as in participatory evaluation, it is crucial that many voices get involved in the process.

Second, the design and implementation of the evaluation in CRE are collaborative and seek to disrupt the traditional power dynamics (where the evaluator works in isolation). In CRE, the project team, audience, or even community members may play a role in evaluation design and data collection.

Third, the analysis is conducted in a way that pays attention to culture and system analysis. We think bigger about our findings and look beyond our own perspectives. Here, we might bring others into the analysis process (for us, this often means a sense-making workshop) or ask numerous and diverse voices to weigh in on their interpretation of the data.

Fourth, the reporting structure in CRE is different from that in traditional evaluation. In traditional evaluation, reports are often produced and disseminated to the funder, board of directors, or other top-level decision makers. In CRE, we widen our understanding of who is important to serve in the dissemination stage and center participants and community members as key. We might develop a webinar specifically for teen program participants, develop an additional version of the report or executive summary specifically for community members, or host a workshop to share details of the evaluation and solicit feedback.

Finally, how findings are applied can be quite different between the two approaches. In traditional evaluation, our work can be seen as a mechanism for accountability and monitoring: basically, “Is your program producing what it said it would?” In CRE, we use the evaluation to build capacity, improve programs, and focus on continuous learning.

There are many ways that you can incorporate the practices of culturally responsive evaluation into your work. Check out this material from InformalScience.org to learn more.

References:

Culturally responsive evaluation in informal STEM environments and settings. (2015, November 9). Retrieved January 30, 2026, from https://informalscience.org/culturally-responsive-evaluation-informal-stem-environments-and-settings/
